x-technology

Operating Agent-Based Systems - Overview, Configure, Run, Orchestrate, Monitor

This workshop explores how standalone agents operate at the runtime level and how they differ from traditional AI pipelines. We examine agent architecture, planning loops, memory models, and tool execution. We also cover multi-agent coordination, including state isolation and resource control. A key focus is security and governance: capability-based access, sandboxing, and injection risks. Finally, we address observability and supervision: tracing reasoning, auditing tool usage, and implementing control mechanisms for production systems. All examples and concepts are grounded in the Node.js stack. We also explore why Node.js is well suited to building production-ready agent runtimes, where it serves as the control plane for supervision, integration, streaming execution, and distributed coordination.

Prerequisites

- Good understanding of JavaScript or TypeScript

- Experience with Node.js and API development

- Basic knowledge of databases and LLMs is helpful but not required

Goals

- Understand AI agent architecture: loop, planning, memory, tools, guardrails

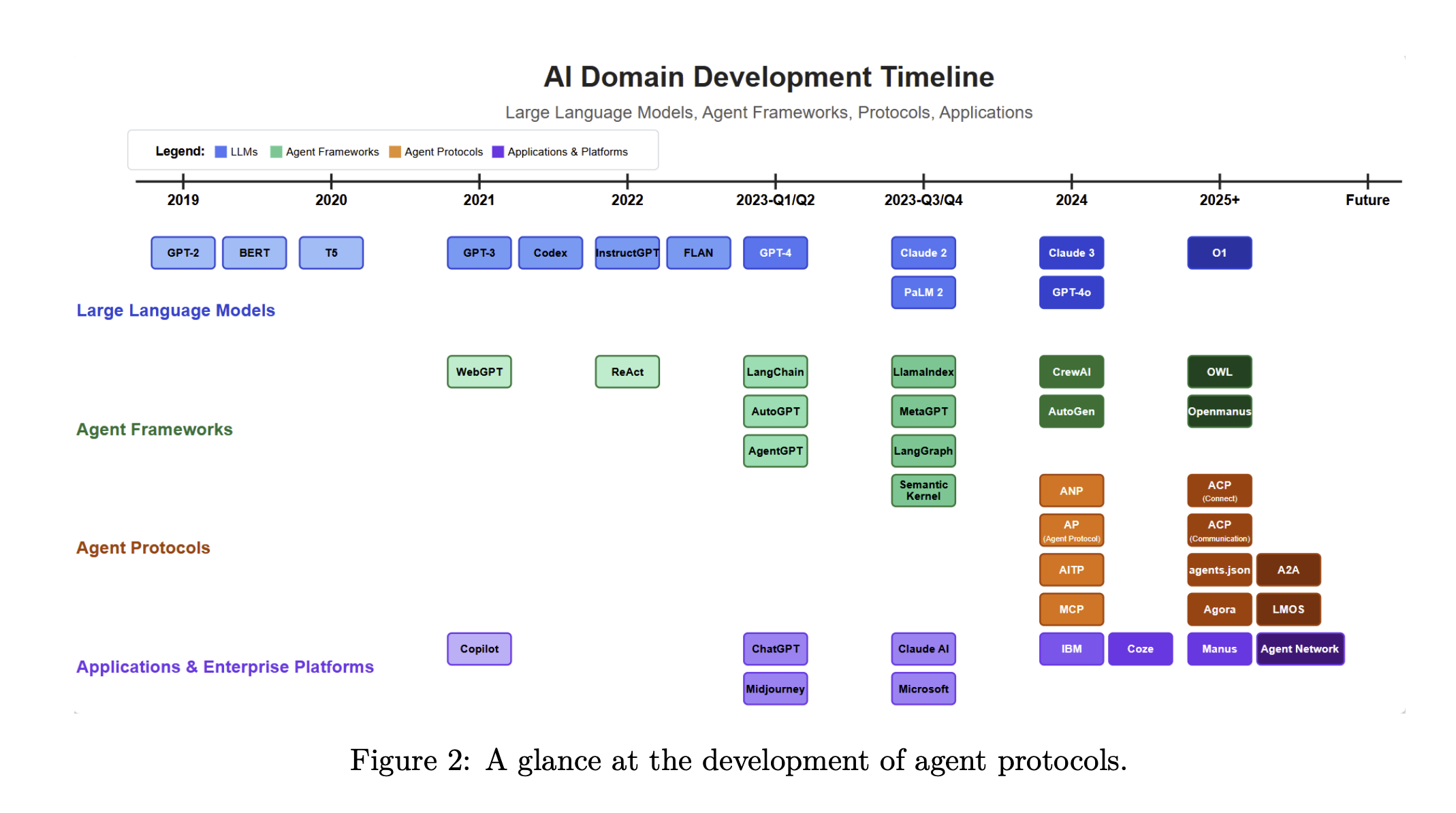

- Compare agent SDKs and protocols (MCP, A2A, ANP)

- Build and orchestrate agents with Node.js as the control plane

- Add security, observability, and n8n integration to production systems

Code

Agenda

- Introduction 📢

- Setup 🛠️

- AI Agents World 🌎

- Demo #1 - Standalone Baseline 👋

- Agents SDK 🧰

- Demo #2 - SDK-Based Agent 🤖

- Agent Protocols 🔗

- Demo #3 - Orchestration 🎻

- Runtime 🔒

- Demo #4 - n8n Integration 🔄

- Demo #5 - Security & Observability 🔍

- Summary 📚

- Feedback 💬

- References 🔗

Introduction

Alex Korzhikov

Software Engineer, Netherlands

My primary interest is self development and craftsmanship. I enjoy exploring technologies, coding open source and enterprise projects, teaching, speaking and writing about programming - JavaScript, Node.js, TypeScript, Go, Java, Docker, Kubernetes, JSON Schema, DevOps, Web Components, Algorithms 🎧 ⚽️ 💻 👋 ☕️ 🌊 🎾

Pavlik Kiselev

Software Engineer, Netherlands

JavaScript developer with full-stack experience and a passion for frontend. He happily works at ING in the Fraud Prevention department, where he helps protect the finances of ING customers.

Setup

- Node.js 18+

- OpenAI-compatible API key for live LLM demos is optional

- Gemini / Google API key for ADK demos is optional

- n8n is installed from this repo via `npm install`

Project setup:

git clone https://github.com/x-technology/workshop-agents.git

cd workshop-agents

npm install

Optional environment examples:

# Step 01 raw HTTP demo

export OPENAI_API_KEY=...

# optional:

export OPENAI_MODEL=gpt-4.1-mini

export OPENAI_BASE_URL=https://api.openai.com/v1

# Step 02 ADK demo with Gemini

export GOOGLE_API_KEY=...

# optional:

export GEMINI_MODEL=gemini-2.5-flash

# Step 02 ADK demo with OpenAI-compatible provider instead of Gemini

export SDK_PROVIDER=openai

export OPENAI_API_KEY=...

export OPENAI_MODEL=gpt-4o-mini

Default offline-friendly behavior:

- `npm run start:01` falls back to naive keyword routing if `OPENAI_API_KEY` is not set

- `npm run start:02` stays on the ADK path and falls back to a local keyword-based `BaseLlm`

- `npm run start:05` writes a local JSONL trace to `src/05-security-observability/trace.jsonl`
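The optional variables and the offline fallback above could be resolved in one place. A minimal sketch, assuming a hypothetical `resolveOpenAiConfig` helper (it is not taken from the workshop repo); the defaults simply mirror the example exports above.

```javascript
// Hypothetical helper, not part of the workshop repo.
// Resolves OpenAI-compatible settings from an env-like object.
function resolveOpenAiConfig(env) {
  const apiKey = env.OPENAI_API_KEY || null;
  return {
    apiKey,
    // Defaults mirror the optional exports shown above.
    model: env.OPENAI_MODEL || "gpt-4.1-mini",
    // Trim trailing slashes so paths can be appended safely.
    baseUrl: (env.OPENAI_BASE_URL || "https://api.openai.com/v1").replace(/\/+$/, ""),
    // Without a key the demo should take the offline keyword path.
    mode: apiKey ? "live" : "offline",
  };
}

const config = resolveOpenAiConfig({ OPENAI_MODEL: "gpt-4o-mini" });
console.log(config.mode, config.model); // offline gpt-4o-mini
```

Passing the environment in as an argument (instead of reading `process.env` inside the function) keeps the helper easy to test.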

AI Agents World

| Aspect | Gen AI | AI Agents | Agentic AI |

|---|---|---|---|

| Goal | Generate content / answers | Complete specific tasks | Achieve complex goals autonomously |

| Autonomy | Low | Medium | High |

| Planning | None | Limited | Advanced |

| Tool Use | No | Yes | Yes (dynamic, multi-tool) |

| Memory | Minimal (per session) | Short / long-term | Persistent, contextual |

| Human Input | High (prompt-driven) | Medium (task oversight) | Low (goal-driven) |

| Typical Use | Q&A, summarization, writing | Booking, data fetching, workflows | Research, orchestration, decision-making |

| Example Behavior | Responds to prompts | Executes steps with tools | Plans, adapts, and executes end-to-end tasks |

IDE as a Code Agent

You’re already using one!

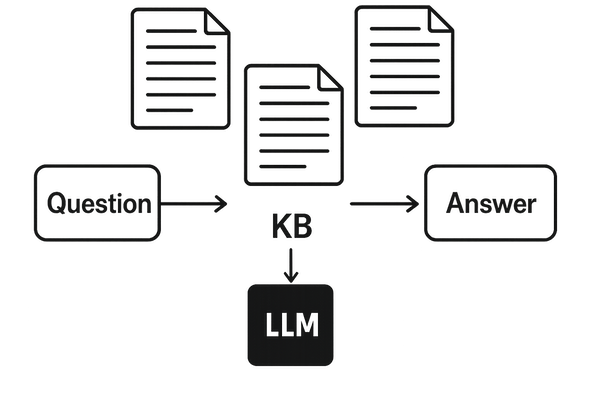

RAG Recap

LLM Agents vs Workflows

- Workflows are systems where LLMs and tools are orchestrated through predefined code paths

AI agents

Systems where LLMs dynamically direct their own processes and tool usage, maintaining control over how they accomplish tasks

A system that autonomously performs tasks on behalf of a user or another system by designing its own workflow and using the available tools

LLM - a Large Language Model with billions of parameters, trained on a very large corpus of text

Loop - the agent's core operating cycle: call the model, run the requested tool, feed the result back, repeat until there is a final answer

Planning - task decomposition, multi-plan selection, external module-aided planning, reflection and refinement, memory-augmented planning, evaluation

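$$p = (a_0, a_1, \cdots, a_t) = \mathrm{plan}(E, g; \Theta, \mathcal{P})$$

$$g_0, g_1, \cdots, g_n = \mathrm{decompose}(E, g; \Theta, \mathcal{P})$$

$$p_i = (a_{i0}, a_{i1}, \cdots, a_{im}) = \text{sub-plan}(E, g_i; \Theta, \mathcal{P})$$

Here $E$ is the environment, $g$ the goal, $\Theta$ the LLM parameters, $\mathcal{P}$ the prompt, and $a_k$ the actions that make up plan $p$.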

Prompt Architectures - Chain of Thought (CoT), ReAct, PRACT, RAISE, Reflexion, …

// ReAct

while (true) {

const response = await llm(messages, tools);

if (response.tool_call) {

const result = await runTool(response.tool_call);

messages.push(result);

} else {

return response.output;

}

}
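The ReAct loop above can be made runnable with a stubbed model. This is a sketch under assumptions: `fakeLlm`, the `add` tool, and the message shapes are invented for illustration, and a step limit is added so the loop cannot spin forever.

```javascript
// Stubbed model: asks for one tool call, then answers.
// A real `llm` would call a model API instead.
async function fakeLlm(messages) {
  const toolResult = messages.find((m) => m.role === "tool");
  if (!toolResult) {
    return { tool_call: { name: "add", args: { a: 2, b: 3 } } };
  }
  return { output: `The sum is ${toolResult.content}` };
}

// Hypothetical tool table; names and shapes are illustrative.
const tools = {
  add: async ({ a, b }) => String(a + b),
};

async function reactLoop(llm, task, maxSteps = 5) {
  const messages = [{ role: "user", content: task }];
  for (let step = 0; step < maxSteps; step++) {
    const response = await llm(messages, tools);
    if (!response.tool_call) {
      return response.output; // the model produced a final answer
    }
    const { name, args } = response.tool_call;
    const tool = tools[name];
    // Report an unknown tool back to the model instead of crashing the loop.
    const content = tool ? await tool(args) : `Unknown tool: ${name}`;
    messages.push({ role: "tool", name, content });
  }
  throw new Error(`No final answer after ${maxSteps} steps`);
}

reactLoop(fakeLlm, "What is 2 + 3?").then((answer) => console.log(answer));
// prints "The sum is 5"
```

The step limit is the simplest form of budget: a model that keeps requesting tools ends in an error instead of an endless loop.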

// Reflexion

export async function reflexionLoop(task: string) {

let bestAnswer = null;

let bestScore = -Infinity;

for (let i = 0; i < 3; i++) {

console.log(`Attempt ${i + 1}`);

const trajectory = await runAgent(task);

const evaluation = await evaluateTrajectory(task, trajectory);

const reflection = await reflect(task, trajectory, evaluation);

storeReflection(reflection);

console.log("Score:", evaluation.score);

console.log("Lessons:", reflection.lessons);

if (evaluation.score > bestScore) {

bestScore = evaluation.score;

bestAnswer = trajectory.finalAnswer;

}

}

return bestAnswer;

}

Memory - the processes used to acquire, store, retain, and later retrieve information. It is part of context engineering: short-term memory (trigger, prompt, session) vs long-term memory (DB, RAG).
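The short-term vs long-term split can be sketched in a few lines. The class and function names below are hypothetical, and keyword overlap stands in for the vector search a real RAG store would use.

```javascript
// Short-term memory: a bounded window of recent messages (what goes into the prompt).
class ShortTermMemory {
  constructor(maxMessages = 4) {
    this.maxMessages = maxMessages;
    this.messages = [];
  }
  add(message) {
    this.messages.push(message);
    // Drop the oldest entries once the window is full.
    if (this.messages.length > this.maxMessages) {
      this.messages.splice(0, this.messages.length - this.maxMessages);
    }
  }
}

// Long-term memory: a store queried on demand.
class LongTermMemory {
  constructor() {
    this.facts = [];
  }
  remember(text) {
    this.facts.push(text);
  }
  // Ranks facts by how many of their words also appear in the query.
  recall(query, limit = 2) {
    const words = new Set(query.toLowerCase().match(/\w+/g) || []);
    return this.facts
      .map((text) => ({
        text,
        score: (text.toLowerCase().match(/\w+/g) || []).filter((w) => words.has(w)).length,
      }))
      .filter((hit) => hit.score > 0)
      .sort((a, b) => b.score - a.score)
      .slice(0, limit)
      .map((hit) => hit.text);
  }
}

// Context engineering: recalled facts first, then the recent window, then the new input.
function buildContext(shortTerm, longTerm, userInput) {
  const recalled = longTerm
    .recall(userInput)
    .map((text) => ({ role: "system", content: `Known: ${text}` }));
  return [...recalled, ...shortTerm.messages, { role: "user", content: userInput }];
}
```

Only the facts relevant to the current input are pulled into the prompt, which keeps the context small while the long-term store can keep growing.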

Tools - extend the LLM with the ability to act outside its context: read data (files, APIs, web), compute (code execution), act (send email, write to a DB, click UI)

Demo #1 - Standalone Baseline

Goal:

- Show an agent-like flow without any SDK

- Use raw OpenAI-compatible HTTP and strict JSON output

- Keep a naive keyword fallback when no key is configured

Files:

- `src/01-standalone/run.js`

- `src/runtime/openai-compatible.js`

- `src/runtime/tracer.js`

Run:

npm run start:01

Examples:

OPENAI_API_KEY=... npm run start:01

STANDALONE_EMAIL_JSON='{"from":"boss@company.com","subject":"Please follow up","body":"Can you schedule a call and send me a summary?"}' npm run start:01

What to point out in the demo:

- no SDK, just `fetch`

- raw prompt + JSON contract

- local trace output

- fallback still gives deterministic `task | event | no_action`
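The strict JSON contract and the keyword fallback fit together roughly as follows. This is a sketch, not the code from `src/01-standalone/run.js`: the function names and keyword lists are assumptions chosen for illustration.

```javascript
const CATEGORIES = ["task", "event", "no_action"];

// Deterministic fallback: naive keyword routing, no model involved.
// The keyword lists are illustrative, not the ones used in the repo.
function classifyByKeywords(email) {
  const text = `${email.subject} ${email.body}`.toLowerCase();
  if (/\b(invite|invitation|meeting|calendar)\b/.test(text)) return "event";
  if (/\b(please|follow up|schedule|send|todo)\b/.test(text)) return "task";
  return "no_action";
}

// Strict JSON contract: accept the model reply only if it parses
// and carries a known category; otherwise fall back.
function parseClassification(rawReply, email) {
  try {
    const parsed = JSON.parse(rawReply);
    if (parsed && CATEGORIES.includes(parsed.category)) {
      return { category: parsed.category, source: "llm" };
    }
  } catch {
    // Malformed JSON falls through to the keyword path.
  }
  return { category: classifyByKeywords(email), source: "fallback" };
}

const email = {
  from: "boss@company.com",
  subject: "Please follow up",
  body: "Can you schedule a call and send me a summary?",
};
console.log(parseClassification("not json", email));
// { category: 'task', source: 'fallback' }
```

Recording the `source` makes it visible in the trace whether an answer came from the model or from the fallback.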

Agents SDK

| | Claude Agent SDK | OpenAI Agents SDK | Google ADK | Vercel AI SDK | LangChain |

|---|---|---|---|---|---|

| Primary purpose | Runtime for Claude-based agents with tool use + MCP | Build multi-step agents on OpenAI APIs | Build agents on Gemini / Vertex AI | Fullstack AI toolkit (not agent-first) | Composable chains, agent flows |

| Languages | TypeScript, Python ⚠️ (Python partial) | TypeScript, Python | Python, TypeScript, Go, and Java | TypeScript / JavaScript | Python, TypeScript |

| Model support | Claude only | OpenAI (⚠️ LiteLLM workaround) | Model-agnostic | Model-agnostic | Model-agnostic |

| Orchestration & Multi-agent | Subagents, tool loops, hooks; basic multi-agent orchestration | Agents + handoffs; native multi-agent support | Pipelines (seq/parallel); A2A protocol (early stage) | Tool-based loops, limited multi-agent | Chains, agent executors |

| Loop control | ⚠️ Hooks into steps, loop is internal | ❌ Hidden — tools + instructions only | ⚠️ Orchestration-based, not loop-level | ❌ Loop is internal | Partial via chains |

| Tools | MCP, bash, browser, file system | Function calling, tools, MCP | Google tools + functions ⚠️ (MCP maturity?) | Tool calling, MCP | Tool calling, MCP |

| Memory | CLAUDE.md + runtime context ⚠️ (not true long-term memory) | Threads + state | Vertex memory ⚠️ (needs validation depth) | Per-request (stateless by default) | Buffers + vector DB |

| Best fit | Tool-heavy automation agents | Fast production agents | Google ecosystem | AI web apps | Flexible agent flows, prototyping |

import { FunctionTool, LlmAgent } from "@google/adk";

import { z } from "zod";

/* Mock tool implementation */

const getCurrentTime = new FunctionTool({

name: "get_current_time",

description: "Returns the current time in a specified city.",

parameters: z.object({

city: z

.string()

.describe("The name of the city for which to retrieve the current time."),

}),

execute: ({ city }) => {

return {

status: "success",

report: `The current time in ${city} is 10:30 AM`,

};

},

});

export const rootAgent = new LlmAgent({

name: "hello_time_agent",

model: "gemini-flash-latest",

description: "Tells the current time in a specified city.",

instruction: `You are a helpful assistant that tells the current time in a city.

Use the 'get_current_time' tool for this purpose.`,

tools: [getCurrentTime],

});

Key features:

- Agent loop

- Model-agnostic

- Deployment-agnostic

- Built-in tools - file operations, web search, code execution. Compare Claude vs OpenAI

Demo #2 - SDK-Based Agent

Goal:

- Replace ad-hoc HTTP logic with reusable ADK agents

- Show three agent roles:

- classify email

- create task simulation

- create agenda item simulation

Files:

- `src/02-sdk/run.js`

- `src/02-sdk/agents.js`

- `src/02-sdk/adk-runner.js`

- `src/02-sdk/model-resolver.js`

Run:

npm run start:02

Examples:

GOOGLE_API_KEY=... npm run start:02

SDK_PROVIDER=openai OPENAI_API_KEY=... npm run start:02

npm run start:02

What to point out in the demo:

- same behavior stays runnable without credentials

- step `02` is now the reusable agent catalog for later steps

- output is split into `classification` and specialist `result`

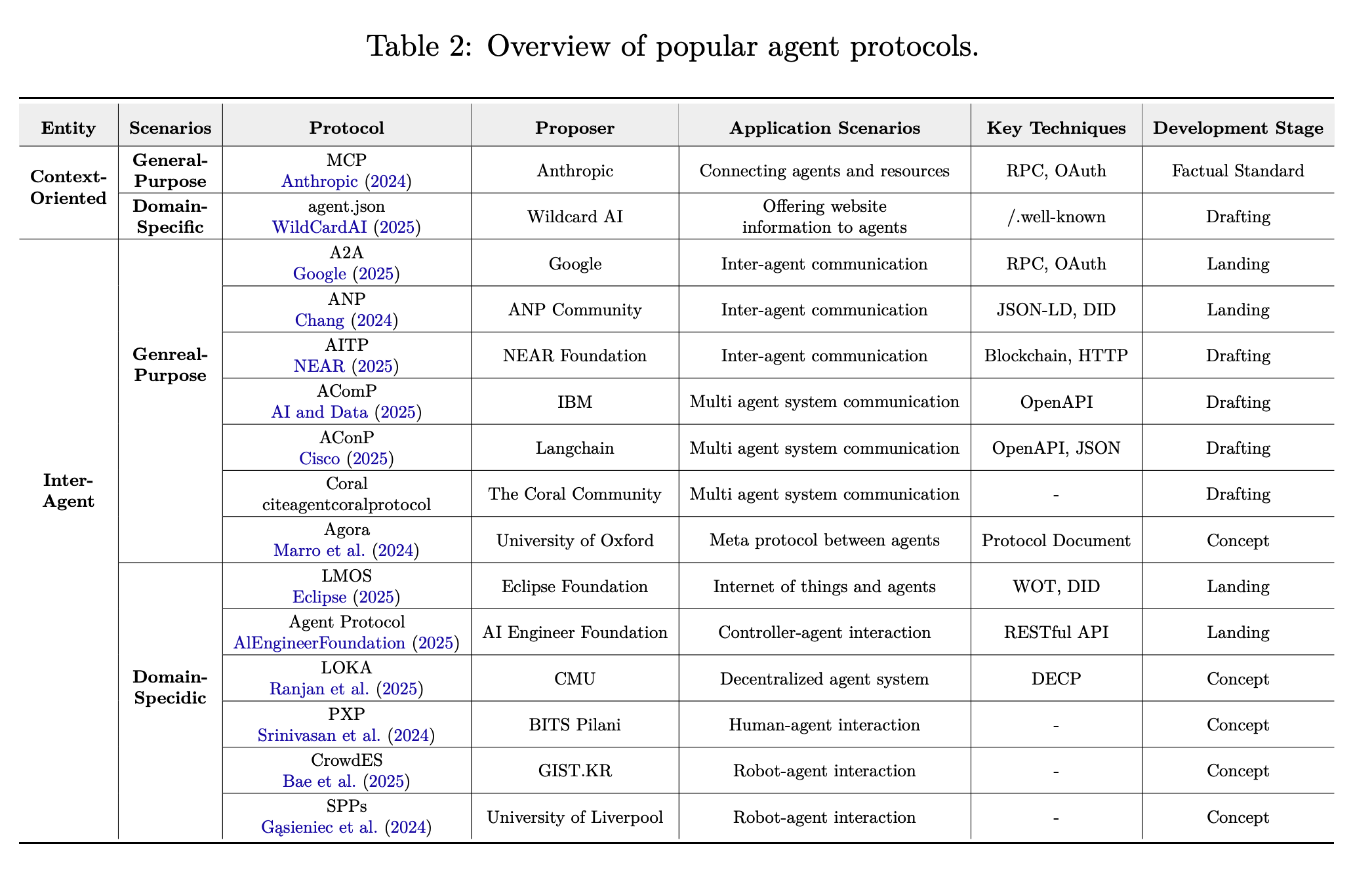

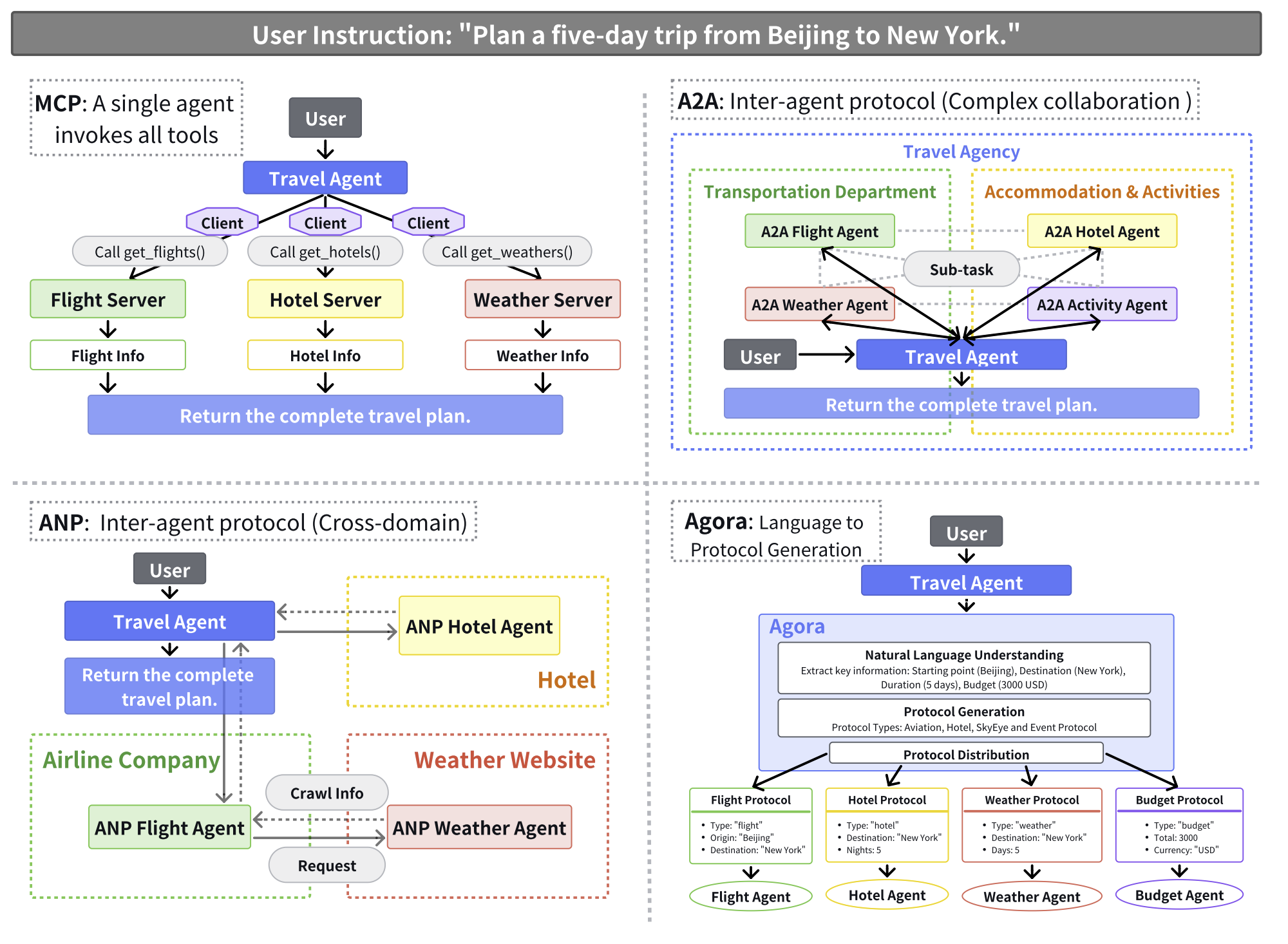

Agent Protocols

Agentic AI - systems composed of multiple co-ordinated AI agents that can break down tasks, collaborate, and pursue complex objectives autonomously over extended periods.

Agent protocols are standardized frameworks that define the rules, formats, and procedures for structured communication among agents and between agents and external systems (c)

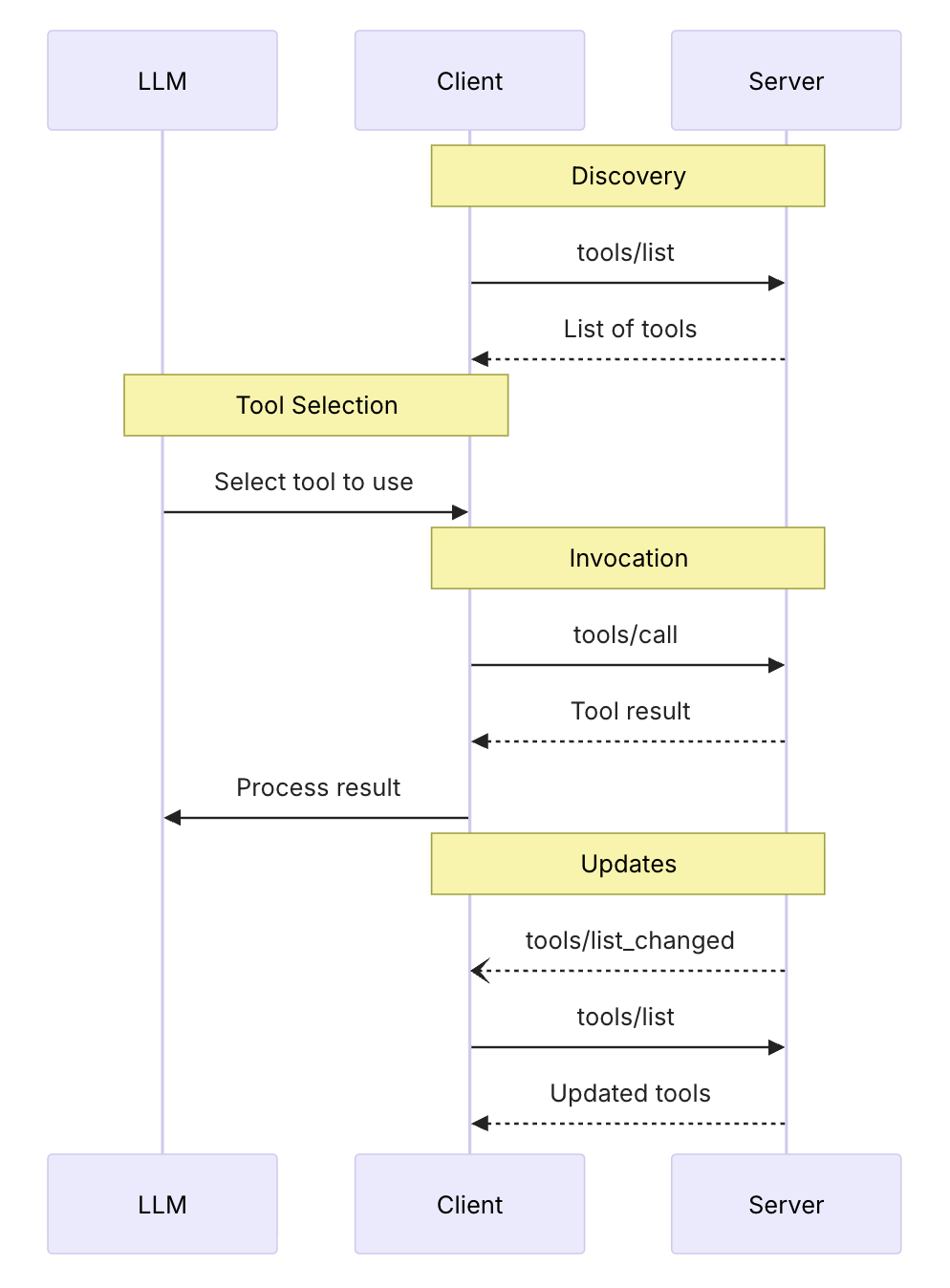

MCP - Model Context Protocol

Anthropic, November 2024, Specification based on the Function calling flow

for (const toolCall of response.output) {

if (toolCall.type !== "function_call") {

continue;

}

const name = toolCall.name;

const args = JSON.parse(toolCall.arguments);

const result = callFunction(name, args);

input.push({

type: "function_call_output",

call_id: toolCall.call_id,

output: result.toString(),

});

}

MCP provides a standardized way for applications to:

- Share contextual information with language models

- Expose tools and capabilities to AI systems

- Build composable integrations and workflows

- Resources: Context and data, for the user or the AI model to use

- Prompts: Templated messages and workflows for users

- Tools: Functions for the AI model to execute

- Communication Layer: Authentication, Notifications, JSON-RPC

- Servers

{

"name": "get_weather_data",

"title": "Weather Data Retriever",

"description": "Get current weather data for a location",

"inputSchema": {

"type": "object",

"properties": {

"location": {

"type": "string",

"description": "City name or zip code"

}

},

"required": ["location"]

},

"outputSchema": {

"type": "object",

"properties": {

"temperature": {

"type": "number",

"description": "Temperature in celsius"

},

"conditions": {

"type": "string",

"description": "Weather conditions description"

},

"humidity": {

"type": "number",

"description": "Humidity percentage"

}

},

"required": ["temperature", "conditions", "humidity"]

}

}
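A client uses a definition like this in two ways: it checks arguments against `inputSchema`, then sends a JSON-RPC `tools/call` request. The sketch below covers both steps; `checkArguments` and `buildToolCall` are hypothetical helpers, and the check handles only required fields and primitive types, whereas a real client would use a full JSON Schema validator.

```javascript
// Tool definition trimmed from the example above.
const weatherTool = {
  name: "get_weather_data",
  inputSchema: {
    type: "object",
    properties: { location: { type: "string" } },
    required: ["location"],
  },
};

// Minimal check of required fields and primitive types only.
function checkArguments(tool, args) {
  const { properties = {}, required = [] } = tool.inputSchema;
  const errors = [];
  for (const key of required) {
    if (!(key in args)) errors.push(`missing required argument: ${key}`);
  }
  for (const [key, value] of Object.entries(args)) {
    const expected = properties[key] && properties[key].type;
    if (["string", "number", "boolean"].includes(expected) && typeof value !== expected) {
      errors.push(`argument ${key} should be ${expected}`);
    }
  }
  return errors;
}

// Builds the JSON-RPC 2.0 envelope for an MCP `tools/call` request.
function buildToolCall(id, tool, args) {
  const errors = checkArguments(tool, args);
  if (errors.length > 0) throw new Error(errors.join("; "));
  return {
    jsonrpc: "2.0",
    id,
    method: "tools/call",
    params: { name: tool.name, arguments: args },
  };
}

console.log(JSON.stringify(buildToolCall(1, weatherTool, { location: "Amsterdam" })));
```

Validating before sending keeps malformed model output from ever reaching the server, which is the point of contract-first tools.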

Agent-to-Agent (A2A) - Inter-Agent Protocol, April 2025

![A2A agentic stack](https://a2a-protocol.org/latest/assets/agentic-stack.png)

Concepts:

- Agent Card - JSON document describing an agent’s abilities & requirements. Enables clients to discover agents and understand how to interact with them effectively.

const movieAgentCard: AgentCard = {

name: "Movie Agent",

description:

"An agent that can answer questions about movies and actors using TMDB.",

// Adjust the base URL and port as needed. /a2a is the default base in A2AExpressApp

url: "http://localhost:41241/", // Example: if baseUrl in A2AExpressApp

provider: {

organization: "A2A Samples",

url: "https://example.com/a2a-samples", // Added provider URL

},

version: "0.0.2", // Incremented version

capabilities: {

streaming: true, // The new framework supports streaming

pushNotifications: false, // Assuming not implemented for this agent yet

stateTransitionHistory: true, // Agent uses history

},

securitySchemes: undefined, // Or define actual security schemes if any

security: undefined,

defaultInputModes: ["text"],

defaultOutputModes: ["text", "task-status"], // task-status is a common output mode

skills: [

{

id: "general_movie_chat",

name: "General Movie Chat",

description:

"Answer general questions or chat about movies, actors, directors.",

tags: ["movies", "actors", "directors"],

examples: [

"Tell me about the plot of Inception.",

"Recommend a good sci-fi movie.",

"Who directed The Matrix?",

"What other movies has Scarlett Johansson been in?",

"Find action movies starring Keanu Reeves",

"Which came out first, Jurassic Park or Terminator 2?",

],

inputModes: ["text"], // Explicitly defining for skill

outputModes: ["text", "task-status"], // Explicitly defining for skill

},

],

supportsAuthenticatedExtendedCard: false,

};

- Message - Communication between a client and an agent, containing content and a role (“user” or “agent”). Contains instructions, context, questions, answers, or status updates that are not necessarily formal artifacts.

{

"jsonrpc": "2.0",

"id": "req-001",

"method": "SendMessage",

"params": {

"message": {

"role": "user",

"parts": [

{

"text": "Generate an image of a sailboat on the ocean."

}

],

"messageId": "msg-user-001"

}

}

}

- Task - Unit of work initiated by an agent, with a unique ID and defined lifecycle.

- Artifact - Output generated by an agent during a task.

{

"jsonrpc": "2.0",

"id": "req-001",

"result": {

"task": {

"id": "task-boat-gen-123",

"contextId": "ctx-conversation-abc",

"status": {

"state": "TASK_STATE_COMPLETED"

},

"artifacts": [

{

"artifactId": "artifact-boat-v1-xyz",

"name": "sailboat_image.png",

"description": "A generated image of a sailboat on the ocean.",

"parts": [

{

"filename": "sailboat_image.png",

"mediaType": "image/png",

"raw": "base64_encoded_png_data_of_a_sailboat"

}

]

}

]

}

}

}

- Part - Holds one of: text content, a file reference (URL or inline bytes), or structured data in messages and artifacts.

- Direct vs Decentralized Orchestration

- Router

- Service Discovery

- Registry

- Local A2A example
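Router, service discovery, and registry can be pictured with a small in-memory example. The `AgentRegistry` class and `route` function are assumptions made for illustration and are not part of any A2A SDK; the card reuses a few fields from the Movie Agent card above.

```javascript
// In-memory registry of Agent Cards; a stand-in for service discovery.
class AgentRegistry {
  constructor() {
    this.cards = new Map();
  }
  register(card) {
    this.cards.set(card.name, card);
  }
  // Returns cards with at least one skill carrying the tag.
  findByTag(tag) {
    return [...this.cards.values()].filter((card) =>
      card.skills.some((skill) => skill.tags.includes(tag))
    );
  }
}

// Router: picks the first matching agent and returns its endpoint.
function route(registry, tag) {
  const [card] = registry.findByTag(tag);
  if (!card) throw new Error(`No agent advertises the "${tag}" tag`);
  return card.url;
}

const registry = new AgentRegistry();
registry.register({
  name: "Movie Agent",
  url: "http://localhost:41241/",
  skills: [{ id: "general_movie_chat", tags: ["movies", "actors", "directors"] }],
});
console.log(route(registry, "actors")); // http://localhost:41241/
```

In a direct setup the client calls the returned URL itself; in a decentralized setup each agent would hold or query such a registry on its own.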

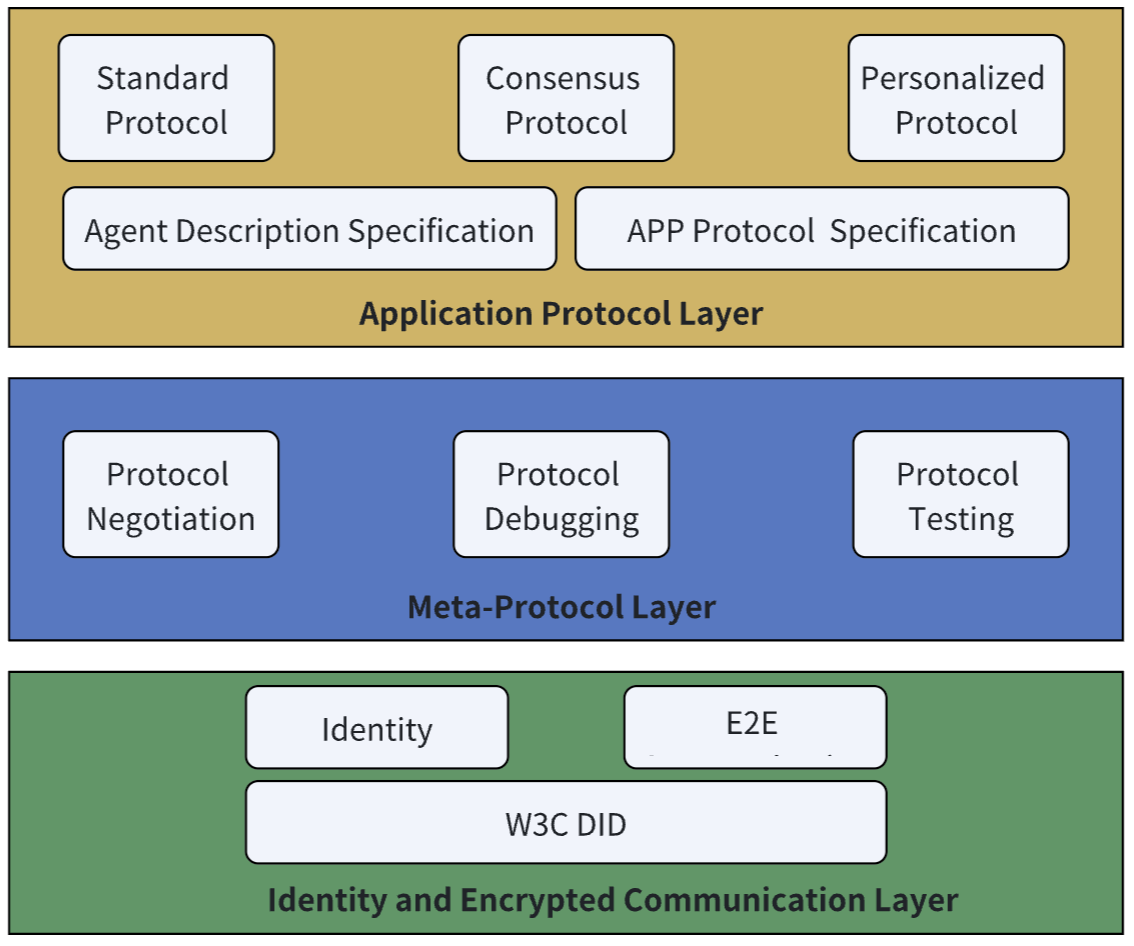

ANP - Agent Network Protocol - Defines how agents connect with each other in an open, secure, and efficient collaboration network

- Peer-to-peer architecture

- Agent discovery

Demo #3 - Orchestration

Goal:

- Reuse the step `02` agents from one orchestration file

- Show the “monolith” analogy: routing and dispatch are easy to start, but pile up in one place

Files:

- `src/03-orchestrator/run.js`

- `src/03-orchestrator/email-router.js`

Run:

npm run start:03

Example:

ORCHESTRATOR_EMAIL_JSON='{"from":"hr@company.com","subject":"Team sync invitation","body":"Calendar invite for tomorrow at 10:00"}' npm run start:03

What to point out in the demo:

- `03` does not invent new agents

- it composes `classifyEmailAgent`, `createTaskAgent`, and `createAgendaItemAgent`

- good for understanding orchestration, bad for long-term maintainability
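The monolith shape can be sketched with stub agents. The three agent names come from the notes above, but their bodies here are placeholders (assumptions), not the ADK agents from step 02, and `routeEmail` is a hypothetical name.

```javascript
// Stub agents standing in for the step 02 catalog; real ones would call a model.
const agents = {
  classifyEmailAgent: async (email) => ({
    category: /invite|invitation/i.test(`${email.subject} ${email.body}`) ? "event" : "task",
  }),
  createTaskAgent: async (email) => ({ type: "task", title: email.subject }),
  createAgendaItemAgent: async (email) => ({ type: "agenda_item", title: email.subject }),
};

// The "monolith": classification, routing table, and dispatch in one function.
async function routeEmail(email) {
  const classification = await agents.classifyEmailAgent(email);
  const specialists = {
    task: agents.createTaskAgent,
    event: agents.createAgendaItemAgent,
  };
  const specialist = specialists[classification.category];
  // no_action (or any unknown category) skips the specialist step.
  const result = specialist ? await specialist(email) : null;
  return { classification, result };
}

routeEmail({
  from: "hr@company.com",
  subject: "Team sync invitation",
  body: "Calendar invite for tomorrow at 10:00",
}).then((out) => console.log(out.classification.category, out.result.type));
// prints "event agenda_item"
```

Every new category means another entry in `specialists` and usually another branch nearby, which is how routing logic piles up in one place.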

Runtime

- Isolation

- Least privilege

- Tools have permission settings to allow, block, or prompt the user for approval

- Limit file, network, and credential access, for example by routing traffic through a proxy

options: {

allowedTools: ["Read", "Glob", "Grep"],

permissionMode: "acceptEdits",

continue: true

},
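The allow / block / prompt idea behind these settings can be expressed as a small gate in front of every tool call. This is a generic sketch, not the permission engine of any SDK: `runWithPolicy` and `askUser` are hypothetical, and the `Write` and `Bash` entries are added for illustration.

```javascript
// Policy table: each tool maps to "allow", "block", or "prompt".
// A Map avoids accidental matches on inherited object keys.
const policy = new Map([
  ["Read", "allow"],
  ["Glob", "allow"],
  ["Grep", "allow"],
  ["Write", "prompt"],
  ["Bash", "block"],
]);

// Wraps tool execution with a least-privilege check.
// `askUser` is a hypothetical callback returning true when a human approves.
function runWithPolicy(toolName, run, askUser) {
  const decision = policy.get(toolName) ?? "block"; // unknown tools are denied by default
  if (decision === "block") {
    return { ok: false, reason: `${toolName} is blocked` };
  }
  if (decision === "prompt" && !askUser(toolName)) {
    return { ok: false, reason: `${toolName} was rejected by the user` };
  }
  return { ok: true, value: run() };
}

console.log(runWithPolicy("Bash", () => "rm -rf", () => true));
// { ok: false, reason: 'Bash is blocked' }
```

Denying anything not listed is the least-privilege default: a newly added tool stays unusable until someone decides how it should be treated.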

- Defense in depth

- Security Schemes - Authentication options

- Contract-first tools

- Code and Web responses auto-checks

- Guardrails - Control your model and tool calls with built-in, custom or external hooks

- Human-in-the-Loop

- Decrease temperature and context

- Define budget

docker run \

--cap-drop ALL \

--security-opt no-new-privileges \

--security-opt seccomp=/path/to/seccomp-profile.json \

--read-only \

--tmpfs /tmp:rw,noexec,nosuid,size=100m \

--tmpfs /home/agent:rw,noexec,nosuid,size=500m \

--network none \

--memory 2g \

--cpus 2 \

--pids-limit 100 \

--user 1000:1000 \

-v /path/to/code:/workspace:ro \

-v /var/run/proxy.sock:/var/run/proxy.sock:ro \

agent-image

- Observability - Tracing and Logging
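The "Define budget" item in the list above can be enforced in code with a per-run counter. A minimal sketch: the `RunBudget` class and its default limits are assumptions, and token counts would come from whatever usage data the model API reports.

```javascript
// Tracks spend for one agent run; limits are illustrative defaults.
class RunBudget {
  constructor({ maxSteps = 10, maxTokens = 20000 } = {}) {
    this.maxSteps = maxSteps;
    this.maxTokens = maxTokens;
    this.steps = 0;
    this.tokens = 0;
  }
  // Call once per model or tool step; throws when a limit is exceeded.
  charge(tokensUsed) {
    this.steps += 1;
    this.tokens += tokensUsed;
    if (this.steps > this.maxSteps) {
      throw new Error(`Step budget exceeded (${this.maxSteps})`);
    }
    if (this.tokens > this.maxTokens) {
      throw new Error(`Token budget exceeded (${this.maxTokens})`);
    }
  }
  remaining() {
    return { steps: this.maxSteps - this.steps, tokens: this.maxTokens - this.tokens };
  }
}

const budget = new RunBudget({ maxSteps: 2, maxTokens: 100 });
budget.charge(40);
budget.charge(40);
console.log(budget.remaining()); // { steps: 0, tokens: 20 }
```

Calling `charge` inside the agent loop turns a runaway run into an explicit error that the supervisor can log and report.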

Infrastructure Frameworks

| | n8n | CrewAI | MetaGPT | LangGraph | OpenClaw |

|---|---|---|---|---|---|

| Purpose | Workflow automation platform | Multi-agent framework | Multi-agent meta-framework | Stateful agent graphs & DAGs | Agent orchestration & deployment |

| Orchestration style | Visual workflow DAG | Role-based agent crews | Role-based SOPs & pipelines | Full DAG with cycles & state machines | Graph-based agent routing |

| Hosting | Self-hosted / cloud | Self-hosted / cloud | Self-hosted | Self-hosted / cloud | Self-hosted / cloud |

| Agent integration | Custom nodes, webhooks | Python-native | Python-native | Model-agnostic (Python/TypeScript) | API-first |

| Use case | Connect agents to business workflows | Collaborative task agents | Complex software development tasks | Complex agent systems with full state control | Production agent deployment |

| Language | JavaScript / TypeScript | Python | Python | Python / TypeScript | JavaScript / TypeScript |

Demo #4 - n8n Integration

Goal:

- Expose the same agent boundaries as visual workflow nodes

- Let n8n handle branching instead of hardcoding it in one file

Files:

- `src/04-n8n/README.md`

- `src/04-n8n/EmailClassification.node.js`

- `src/04-n8n/TaskSimulation.node.js`

- `src/04-n8n/AgendaSimulation.node.js`

- `src/04-n8n/EmailRouter.node.js`

Run:

npm run start:04

npm run start:n8n

Suggested workflow:

- `Email Classification Agent`

- `IF/Switch` on `classification.category`

- `Task Simulation Agent` for `task`

- `Agenda Simulation Agent` for `event`

- Optional `Email Router` node as a shortcut for the step `03` monolith behavior

What to point out in the demo:

- step `04` reuses step `02` agents directly

- n8n becomes the orchestration layer

- `Agent Reliability Monitor` belongs conceptually to the step `05` story

Demo #5 - Security & Observability

Goal:

- Add guardrails and monitoring on top of existing agents

- Keep those concerns separate from routing/orchestration logic

Files:

- `src/05-security-observability/observability.js`

- `src/05-security-observability/run.js`

Run:

npm run start:05

What the script demonstrates:

- wraps `classifyEmailAgent`, `createTaskAgent`, and `createAgendaItemAgent`

- blocks simple prompt-injection attempts

- writes success/failure events into `trace.jsonl`

- summarizes per-agent reliability from the current run

What to point out in the demo:

- wrappers are composable

- observability is reduced to success/error rate on purpose

- the reliability node in n8n only reads this trace, it does not create it
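The wrapper behavior listed above can be approximated in a few functions. This is a sketch and not the code from `observability.js`: the patterns, function names, and event fields are assumptions, agents are synchronous for brevity, and the trace lives in memory instead of a file.

```javascript
// Very small deny-list; real injection defenses need more than pattern matching.
const INJECTION_PATTERNS = [
  /ignore (all |any )?(previous|prior) instructions/i,
  /reveal (the )?system prompt/i,
];

// Wraps an agent function with an input guard and trace recording.
function withObservability(name, agent, trace) {
  return (input) => {
    const startedAt = Date.now();
    try {
      if (INJECTION_PATTERNS.some((pattern) => pattern.test(input))) {
        throw new Error("Blocked: possible prompt injection");
      }
      const output = agent(input);
      trace.push({ agent: name, status: "success", ms: Date.now() - startedAt });
      return output;
    } catch (error) {
      trace.push({ agent: name, status: "failure", error: error.message, ms: Date.now() - startedAt });
      throw error;
    }
  };
}

// Per-agent success rate computed from the recorded events.
function summarize(trace) {
  const counts = new Map();
  for (const event of trace) {
    const entry = counts.get(event.agent) ?? { success: 0, failure: 0 };
    entry[event.status] += 1;
    counts.set(event.agent, entry);
  }
  return Object.fromEntries(
    [...counts].map(([agent, c]) => [agent, { ...c, successRate: c.success / (c.success + c.failure) }])
  );
}

// One JSON object per line, the JSONL shape used for trace files.
const toJsonl = (trace) => trace.map((event) => JSON.stringify(event)).join("\n");
```

Because the wrapper returns a function with the same signature as the agent it wraps, it can be stacked with other wrappers without touching the routing code.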

Summary

An agent runtime is a control plane — coordination, policy, memory, and tooling around an LLM. Node.js fits well as that plane, and SDKs plus protocols (MCP, A2A) give you the building blocks. Production readiness comes from structured outputs, guardrails, tracing, and connecting it all to automation tools like n8n.

What’s coming in the next few years?

Feedback

Please share your feedback on the workshop. Thank you, and happy coding!

If you like the workshop, you can become our patron, yay! 🙏

References

- Building effective agents - Engineering at Anthropic Dec 19, 2024

- LLM Zoomcamp - A Free Course on Real-Life Applications of LLMs

- A Survey of AI Agent Protocols

- Lilian Weng - LLM Powered Autonomous Agents

- Awesome AI Agent Protocols

- Understanding the planning of LLM agents: A survey, 5 Feb 2024

- A2A: The Agent2Agent Protocol - DeepLearning.ai

- A2A and MCP: Detailed Comparison

Technologies

- LLM

- Langchain

- RAG

- AI Agents

- MCP

- OpenClaw

- n8n